Neurosurgeons have a tough balancing act when removing brain tumors: Fail to remove the entire tumor, and the cancer will almost certainly return. But if they remove too much healthy brain in the tumor margins, patients can suffer a host of neurological deficits. Complicating their task even further: Healthy and cancerous brain tissues are often visually indistinguishable midsurgery, even with state-of-the-art tools, including a surgical microscope and intraoperative MRI/CT.

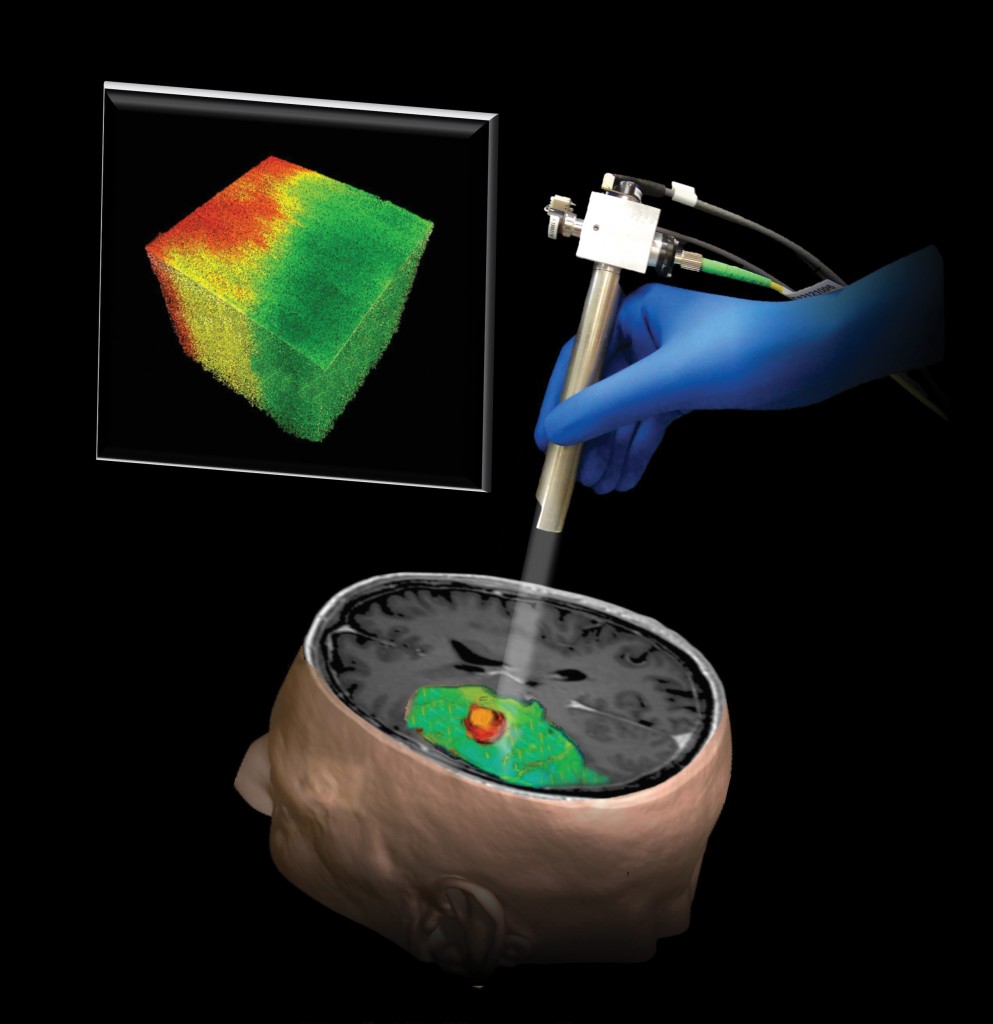

Carmen Kut ’08, PhD ’15, was sure that there had to be a better way. As an MD/PhD candidate at both the Johns Hopkins University School of Medicine and the Whiting School of Engineering, mentored by Xingde Li and Elliot McVeigh, Kut had a strong interest in medical technology and imaging that led her to optical coherence tomography (OCT).

OCT is an echo technique akin to ultrasound imaging: Both build pictures from reflections within tissue. Because OCT uses light, which has a much shorter wavelength than sound, its resolution is much higher than ultrasound’s.

Kut suspected that OCT might make it possible to distinguish between healthy brain and tumor tissue, but she couldn’t know for sure without testing on samples. Fortunately, Alfredo Quinones-Hinojosa, who directs the Brain Tumor Surgery Program at Johns Hopkins Bayview Medical Center, offered to supply Kut with freshly resected tumor samples from the operating room. Almost immediately, Kut noticed that the tumors had a different signature in the laboratory setting. However, finding a way to use this signature directly in patients during surgery was a challenge.

Two years later, she and Li hit on an ideal solution: a real-time, color-coded optical property map that gives surgeons direct visual cues for detecting brain cancer in the operating room. The results, published in the June 2015 issue of Science Translational Medicine, suggest that the technique has excellent sensitivity and specificity.

The next step: Conducting the first studies in patients using OCT and optical property mapping.